SentiaSys applauds NVIDIA’s commitment to advancing AI in the gaming industry. NVIDIA is a global leader and one of the most valuable companies in the world, and its expertise and resources make direct competition unrealistic. However, two key points are clear:

- NVIDIA’s full-scale investment underscores the immense growth potential of this sector; and

- the vast scope of opportunities and the transformative impact on AI technology leave room for specialized niches outside the core focus of NVIDIA’s business model.

One of SentiaSys’ core aspirations is to break free from reliance on cloud-based technology, a path that seems contrary to the direction NVIDIA is pursuing. Only time will reveal how customers respond, but the gaming world is vast, and not everyone will flock to the biggest, wealthiest game developers for their entertainment. There’s a thriving market for small developers and indie games that could significantly benefit from the thoughtful application of AI, and that’s precisely where our focus lies.

In the meantime, for those interested, here’s a summary of NVIDIA ACE that Grok produced:

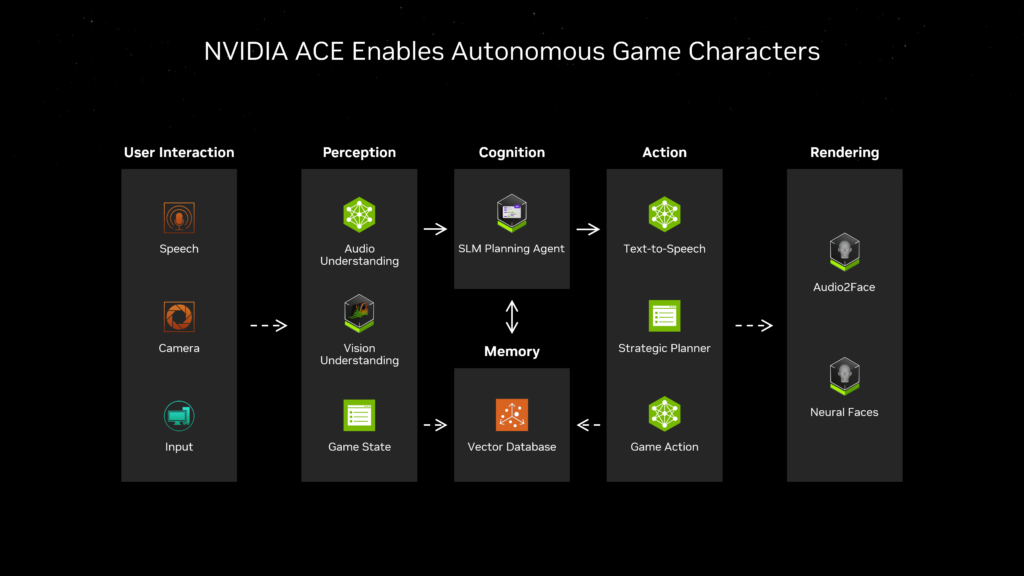

NVIDIA’s Avatar Cloud Engine (ACE) is a suite of generative AI technologies designed to bring digital characters—particularly non-playable characters (NPCs) in games and virtual avatars in applications—to life with human-like intelligence, behavior, and expressiveness.

https://www.nvidia.com/en-us/geforce/news/nvidia-ace-autonomous-ai-companions-pubg-naraka-bladepoint

Introduced in 2023 at COMPUTEX, ACE has evolved rapidly, with significant updates showcased at events like CES 2025, positioning it as a game-changer for interactive experiences. It’s not a single large language model (LLM) but a modular framework integrating various AI microservices, optimized to run on NVIDIA’s RTX GPUs, both in the cloud and on-device. Here’s a deeper dive into what ACE is, how it works, and its implications.

What Is the ACE Framework?

ACE is essentially a “foundry” for creating intelligent, dynamic digital characters. It combines multiple AI models and tools to handle different aspects of character behavior:

- Speech and Conversation: Natural language understanding and generation, enabling unscripted, context-aware dialogue.

- Animation: Real-time facial and body animations synced with audio or emotional states.

- Perception and Cognition: Multimodal capabilities allowing characters to “see,” “hear,” and reason about their environment.

- Action: Decision-making and planning, letting characters act autonomously in response to players or surroundings.

The framework is built on NVIDIA’s Unified Compute Framework (UCF) and leverages technologies like NVIDIA NeMo (for language models), Riva (for speech recognition and synthesis), and Audio2Face (for animation), all packaged as NVIDIA Inference Microservices (NIMs). These microservices are customizable, scalable, and deployable across cloud, data centers, or local RTX-powered PCs, making ACE accessible to developers of games, tools, and middleware.
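To make the "modular framework" idea concrete, here is a minimal sketch of a character pipeline built from swappable stages. It does not call any NVIDIA service: every function and the `Turn` class are hypothetical stand-ins that we made up for illustration, and the "audio" is simply UTF-8 text.

```python
from dataclasses import dataclass
from typing import Callable, List


@dataclass
class Turn:
    """State handed from stage to stage for one player utterance (hypothetical)."""
    audio_in: bytes = b""
    transcript: str = ""
    reply_text: str = ""
    audio_out: bytes = b""
    face_frames: int = 0


Stage = Callable[[Turn], Turn]


def speech_to_text(turn: Turn) -> Turn:
    # Stand-in for a speech recognition service: the "audio" is just UTF-8 text.
    turn.transcript = turn.audio_in.decode("utf-8")
    return turn


def generate_reply(turn: Turn) -> Turn:
    # Stand-in for a language model call.
    turn.reply_text = f"You asked: {turn.transcript}"
    return turn


def text_to_speech(turn: Turn) -> Turn:
    # Stand-in for speech synthesis: encode the reply back to bytes.
    turn.audio_out = turn.reply_text.encode("utf-8")
    return turn


def animate_face(turn: Turn) -> Turn:
    # Stand-in for audio-driven animation: pretend one frame per byte of audio.
    turn.face_frames = len(turn.audio_out)
    return turn


def run_pipeline(stages: List[Stage], turn: Turn) -> Turn:
    """Run each stage in order, passing the shared state along."""
    for stage in stages:
        turn = stage(turn)
    return turn


PIPELINE = [speech_to_text, generate_reply, text_to_speech, animate_face]
result = run_pipeline(PIPELINE, Turn(audio_in=b"How do I upgrade my mech?"))
print(result.reply_text)
```

Because each stage only reads and writes the shared state, any one of them could in principle be replaced by a cloud call or a local model without touching the others, which is the property the summary attributes to the microservice design.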

Key Components and How They Work

- Language and Dialogue (NeMo):

  - ACE uses small language models (SLMs) like Nemotron-4 4B Instruct and Mistral-NeMo-Minitron variants (2B, 4B, 8B parameters). These are fine-tuned for low-latency, instruction-following tasks, such as role-playing or responding to player queries.

  - Example: In the game Mecha BREAK, a mechanic NPC can answer questions about mech customization using Nemotron-4, running on-device for real-time interaction.

  - Features like Retrieval-Augmented Generation (RAG) allow characters to pull context-specific info (e.g., game lore) into responses, enhancing relevance.

- Speech Processing (Riva):

  - Automatic Speech Recognition (ASR) transcribes player speech (e.g., via microphone), while Text-to-Speech (TTS) generates character voices.

  - Supports multilingual capabilities and low-latency conversation, crucial for immersive dialogue.

  - In the Kairos demo (with Convai), Riva enabled an NPC named Jin to listen and respond naturally to spoken input.

- Animation (Audio2Face):

  - Audio2Face generates 3D facial animations from audio input, syncing lip movements and expressions in real time. The Audio2Face-3D NIM, updated in 2024, runs locally on RTX GPUs, reducing latency.

  - Used in games like S.T.A.L.K.E.R. 2 and Fort Solis for lifelike NPC expressions, and in Mecha BREAK for mechanic interactions.

- Perception and Multimodal Input:

  - Newer ACE models (e.g., NemoVision-4B-Instruct) integrate vision and audio, letting characters perceive their environment or real-world inputs via cameras or game states.

  - Example: In Perfect World Games’ Legends demo, the character Yun Ni uses ChatGPT-4o vision to identify objects or people, adding an AR-like layer to gameplay.

- Autonomous Behavior (Cognition and Action):

  - Introduced at CES 2025, ACE now supports “autonomous game characters” that perceive, plan, and act like humans. SLMs like Mistral-NeMo-Minitron-8B handle high-frequency decision-making (e.g., 30-60 Hz, matching game loops).

  - In PUBG: BATTLEGROUNDS, AI teammates adapt to player tactics; in MIR5, bosses evolve strategies based on player behavior.
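The perceive, plan, and act cycle described for autonomous characters can be sketched as a simple loop. This is only an illustration under our own assumptions: the `GameState` fields, the thresholds, and the rule-based `plan` function are hypothetical, and the rules stand in for what would be a small language model in ACE. The `tick_budget_ms` helper shows how little time a 30-60 Hz decision rate leaves per tick.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class GameState:
    """Hypothetical slice of game state visible to the character."""
    enemy_distance: float
    health: int


def tick_budget_ms(rate_hz: float) -> float:
    """Time available for one decision at a given tick rate."""
    return 1000.0 / rate_hz


def perceive(state: GameState) -> dict:
    # Turn raw state into simple observations (thresholds are made up).
    return {
        "enemy_near": state.enemy_distance < 10.0,
        "low_health": state.health < 30,
    }


def plan(obs: dict) -> str:
    # Rule-based stand-in for a model-driven planner.
    if obs["low_health"]:
        return "retreat"
    if obs["enemy_near"]:
        return "attack"
    return "patrol"


def act(state: GameState, action: str) -> GameState:
    # Apply the chosen action to produce the next state.
    if action == "retreat":
        return GameState(state.enemy_distance + 5.0, state.health)
    if action == "attack":
        return GameState(state.enemy_distance, state.health - 10)
    return GameState(state.enemy_distance - 4.0, state.health)  # patrol closes in


def run(state: GameState, ticks: int) -> List[str]:
    """Run the perceive-plan-act cycle for a fixed number of ticks."""
    actions = []
    for _ in range(ticks):
        action = plan(perceive(state))
        state = act(state, action)
        actions.append(action)
    return actions


print(run(GameState(enemy_distance=12.0, health=40), ticks=4))
print(f"{tick_budget_ms(30):.1f} ms per tick at 30 Hz")
print(f"{tick_budget_ms(60):.1f} ms per tick at 60 Hz")
```

At 60 Hz the whole cycle has under 17 ms to finish, which helps explain why the summary stresses small, on-device models for this kind of decision-making.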

Evolution and Updates

- 2023 (Launch): Debuted with the Kairos demo, showing an NPC (Jin) in a ramen shop responding to unscripted dialogue with facial animations, powered by NeMo, Riva, and Audio2Face.

- 2024 (Gamescom): Mecha BREAK became the first game to showcase on-device ACE, with Nemotron-4 and Audio2Face-3D enhancing NPC interactions.

- 2025 (CES): Expanded to autonomous characters with perception (NemoVision-4B) and cognition (Mistral-NeMo SLMs), demoed in titles like inZOI and NARAKA: BLADEPOINT. Introduced large-context SLMs (128k tokens) for complex reasoning.

Technical Details

- Hardware: Optimized for NVIDIA RTX GPUs (e.g., RTX 4090 can run multiple SLMs simultaneously). The smallest SLM, Minitron-2B, fits in 1.5 GB VRAM, making it viable for consumer PCs.

- Microservices: Packaged as NIMs, these are lightweight, containerized AI models deployable via NVIDIA AI Enterprise or locally. Examples include Parakeet-CTC for multilingual ASR and Audio2Emotion for emotional animation cues.

- Integration: Plugins for Unreal Engine 5 and Autodesk Maya (released 2024) simplify embedding ACE into workflows. The Unreal Engine sample project shows how to animate MetaHuman characters with ACE outputs.
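As a rough sanity check on the 1.5 GB figure for a 2B-parameter model, the memory needed for the weights alone is roughly the parameter count times the bytes per weight. The helper below is our own back-of-the-envelope sketch; the precisions shown are assumptions, since the summary does not say how the model is quantized.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Approximate memory for model weights alone, in decimal gigabytes."""
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


# Assumed precisions for illustration; not taken from NVIDIA documentation.
for bits in (16, 8, 4):
    print(f"2B parameters at {bits}-bit: {weight_memory_gb(2, bits):.1f} GB")
```

At 16-bit precision the weights alone would take about 4 GB, so a 1.5 GB footprint suggests some form of quantization, with the remainder going to activations and cache. Treat this as an estimate, not a statement about how NVIDIA actually packages the model.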

Applications

- Gaming: NPCs in Mecha BREAK advise on mech builds, while inZOI’s “Smart Zoi” (using a 0.5B-parameter SLM) offers dynamic companions. MIR5 features adaptive AI bosses.

- Digital Assistants: NVIDIA Tokkio, a reference workflow, uses ACE for customer service avatars in retail or healthcare, blending speech, animation, and RAG.

- Virtual Worlds: Convai’s platform leverages ACE for NPCs that converse among themselves, pick up objects, or guide players, as seen in the updated Kairos demo.
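Retrieval-Augmented Generation, mentioned above for both game lore and Tokkio avatars, boils down to two steps: find the most relevant stored text, then place it in the prompt ahead of the player's question. The sketch below uses plain keyword overlap for retrieval, which is a deliberate simplification (production systems typically use vector embeddings). The lore entries, function names, and prompt layout are all hypothetical.

```python
import re
from typing import List

# Hypothetical lore snippets a developer might store for an NPC.
LORE = [
    "The Falcon mech frame is light and favors speed over armor.",
    "Heavy cannons overheat quickly and need a cooling module.",
    "The ramen shop in the lower district closes at midnight.",
]


def tokenize(text: str) -> set:
    """Lowercase the text and split it into a set of words."""
    return set(re.findall(r"[a-z]+", text.lower()))


def retrieve(query: str, docs: List[str], k: int = 1) -> List[str]:
    """Return the k documents sharing the most words with the query."""
    query_words = tokenize(query)
    ranked = sorted(docs, key=lambda d: len(query_words & tokenize(d)), reverse=True)
    return ranked[:k]


def build_prompt(query: str, docs: List[str]) -> str:
    """Assemble a prompt with retrieved context placed before the question."""
    context = "\n".join(retrieve(query, docs))
    return f"Context:\n{context}\n\nPlayer: {query}\nNPC:"


print(build_prompt("Why do my cannons keep overheating?", LORE))
```

The assembled prompt would then be passed to whichever language model drives the character, so the answer can draw on the retrieved lore instead of relying only on what the model already knows.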

Implications and Potential

- Immersion: NPCs move beyond scripted responses to dynamic, personality-driven interactions, evolving based on player actions (e.g., a PUBG teammate learning your playstyle).

- Development: Reduces the need for pre-written dialogue or animation, though it requires integrating multiple systems, which NVIDIA simplifies with SDKs and samples.

- Future: Multimodal SLMs hint at characters that could “see” real-world players or react to ambient sounds, blurring lines between virtual and physical.

Limitations

- Not Fully Autonomous: While advanced, ACE characters rely on predefined game states or developer-tuned models—they don’t “feel” or learn spontaneously like humans.

- Complexity: Combining perception, cognition, and action demands significant GPU power and developer expertise, though on-device SLMs mitigate latency.

- Scope: Current demos focus on specific use cases (e.g., dialogue, combat); broader open-world autonomy is still emerging.

Conclusion

NVIDIA’s ACE framework is a leap toward lifelike digital characters, blending generative AI with real-time animation and perception. It’s not just about smarter NPCs; it’s about redefining interactivity in games and beyond. With ongoing partnerships (e.g., Tencent, Ubisoft) and tools like Unreal Engine plugins, ACE is poised to become a standard for next-gen experiences.
